This article walks engineers through what we learned building on-premises AI agents for an enterprise client.

Most startups would pause and reconsider on hearing that they need to set up their platform on a customer's own physical servers and infrastructure, in other words, "on-prem."

When this request came to us, we had two choices: deliver, or lose a big enterprise deal. For us, it turned into a weekend project.

Within two to three days, we had a working prototype on the customer's infrastructure. By Monday, our AI agents were handling workflows on their systems, fully visible in their monitoring tools, with updates syncing in real time from our cloud to their on-prem setup. Seeing their reaction was rewarding. The conversation quickly shifted from "prove it" to "when can we go live?"

That experience confirmed what we had believed from the beginning - enterprises want AI agents to run within their own systems. If you design for that from the start, what many call a "DevOps nightmare" can actually be solved in a weekend.

Why On-Prem Isn't Optional for Enterprise AI

Here's the reality most AI platform vendors don't want to talk about - when AI agents operate at the level of accessing internal APIs, executing workflows, and orchestrating multi-step actions across enterprise systems, the attack surface grows with every system an agent can reach.

This isn't about a chatbot answering questions from a knowledge base. We're talking about agents that can trigger deployments, access customer data layers, interact with financial systems, and make decisions that have real business consequences. At that level of access, security isn't a preference. It's a hard requirement.

The mid-to-large enterprise we deployed for operates in the IT management space with a strong security-first culture and strict data governance requirements. They needed AI-powered workflow automation, including multi-agent swarms that interact deeply with their internal systems. But with that level of access, they needed full assurance that every agent action, every API call, every piece of data stayed entirely within their own infrastructure.

Not just the data at rest. Everything. The prompts. The responses. The logs. Every LLM call context. Zero data leaving the perimeter. Non-negotiable.

The Market Gap Nobody's Talking About

We evaluated the competitive landscape. Most AI platforms assume cloud access. They're built with open endpoints, external LLM calls routed through the vendor's infrastructure, data leaving the perimeter for processing. The architecture assumes you're okay with your sensitive data touching someone else's servers, even if just in memory, even if just for inference.

The enterprises we talked to? Their infrastructure was ready for AI. They had mature Kubernetes deployments, established DevOps practices, internal monitoring tooling, and strict network policies governing what goes in and out of their VPC. The problem wasn't their readiness. The problem was that nothing on the market was ready for their infrastructure.

The options were either open-cloud solutions suitable for small-scale development and coding exercises, or massive custom builds that would take a year and a dedicated team to operationalize. Neither worked for enterprises that needed production-grade AI agents running today, not next year.

Why This Matters Now

AI agents aren't just assistants anymore. They're operating at the system level. They're making decisions, triggering workflows, accessing APIs, and orchestrating actions across multiple services. When you give an agent that kind of power, you need two things in equal measure: security and observability.

Security without observability is a black box. You might feel safe because data isn't leaving your perimeter, but you have no idea what's happening inside. Observability without security means you can see everything, but you're constantly worried about where that visibility is being logged, who has access to it, and whether it's creating new attack vectors.

Enterprises don't just want powerful agents. They want agents they can fully own, fully audit, and fully see. That's the trust equation. And most platforms only solve half of it.

The "DevOps Nightmare" We Decided to Avoid

Let me be direct - on-premise deployment for AI platforms is notoriously difficult. It's widely regarded as a DevOps nightmare because of dependencies on third-party tools, managed cloud services, and external libraries that assume cloud infrastructure.


The complexity multiplies when you're deploying not just a single AI model, but a full agent orchestration layer where multiple agents can run concurrently, access different systems, and need to be individually traceable and auditable. Most companies that try to retrofit a cloud product for on-premise delivery spend six months to a year in the process. Some never ship it.

We went from initial request to production deployment in two to four weeks.

That timeline isn't luck. It's the result of an architectural bet we made from day one.

The Decision That Changed Everything

Adopt AI's leadership had the foresight to anticipate that when enterprises adopt AI agents, they'd want those agents running inside their own walls. Not as an afterthought. Not as a "premium tier." As the default model for any organization serious about security.

So from the earliest days of building the platform, we made specific choices:

- Open-source, cloud-agnostic libraries - No hard dependencies on managed cloud services that won't exist in a customer's data center.

- Modular, isolated systems - Each component can be packaged, deployed, and scaled independently.

- Observability from the start - Not bolted on later, but designed into the architecture as a first-class concern.

There was no "rip and replace" moment when enterprise demand came. The platform was already built for it. We just had to package and ship.

What This Actually Means Technically

We used open-source, self-hostable components wherever possible. We kept systems isolated so they could be packaged cleanly and deployed easily by the customer. We avoided the temptation to use convenient managed services that would create deployment headaches later.
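
To make "avoid managed services" concrete, here is a minimal sketch of the pattern in Python: application logic depends on a small storage interface, and the backend is chosen at deploy time. The names (`BlobStore`, `LocalDiskStore`, `InMemoryStore`, `save_workflow_artifact`) are hypothetical and not taken from Adopt AI's codebase; in a real deployment the backend behind the interface might be a mounted volume or a self-hosted object store.

```python
import tempfile
from pathlib import Path
from typing import Protocol


class BlobStore(Protocol):
    """The only storage contract the application code depends on."""

    def put(self, key: str, data: bytes) -> None: ...

    def get(self, key: str) -> bytes: ...


class LocalDiskStore:
    """Backend for any filesystem or mounted volume, e.g. a PersistentVolume."""

    def __init__(self, root: Path) -> None:
        self.root = root
        self.root.mkdir(parents=True, exist_ok=True)

    def put(self, key: str, data: bytes) -> None:
        path = self.root / key
        path.parent.mkdir(parents=True, exist_ok=True)
        path.write_bytes(data)

    def get(self, key: str) -> bytes:
        return (self.root / key).read_bytes()


class InMemoryStore:
    """Backend for tests and local development; needs no infrastructure."""

    def __init__(self) -> None:
        self._data: dict[str, bytes] = {}

    def put(self, key: str, data: bytes) -> None:
        self._data[key] = data

    def get(self, key: str) -> bytes:
        return self._data[key]


def save_workflow_artifact(store: BlobStore, workflow_id: str, payload: bytes) -> str:
    """Application logic: sees only the interface, never a vendor SDK."""
    key = f"workflows/{workflow_id}/artifact.bin"
    store.put(key, payload)
    return key


def demo() -> bytes:
    # The same call works against either backend; here, a throwaway directory.
    with tempfile.TemporaryDirectory() as tmp:
        store = LocalDiskStore(Path(tmp))
        key = save_workflow_artifact(store, "wf-123", b"result")
        return store.get(key)
```

Adding a cloud-specific backend later then means writing one more class, not rewriting every caller.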

When the request came in late November, we had the core functionality working within four to five days. The customer had agents running on their development instance, executing workflows, with full traceability. The subsequent month was spent hardening the deployment, adding enterprise-grade monitoring hooks, and building out the self-serve portal experience.

If we'd built for cloud first and tried to reverse-engineer an on-prem package later, we'd still be working on it. That's where the real nightmare begins.

What We Actually Built

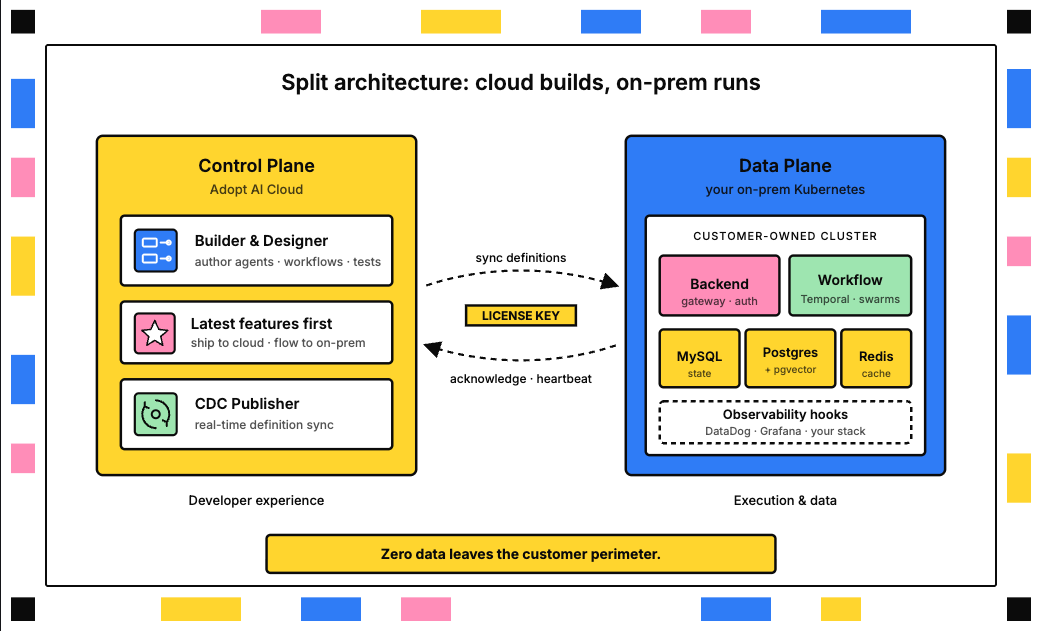

The Adopt AI platform deploys as a split architecture: the Data Plane runs on-premise within the customer's infrastructure, and the Control Plane runs on Adopt AI's Cloud.

The On-Prem Data Plane

Everything that touches customer data, executes agent workflows, or processes sensitive information runs entirely within the customer's Kubernetes cluster:

- Adopt AI Backend: API gateway, authentication, and routing layer

- Workflow Services: AI agent orchestration powered by Temporal

- MySQL: Primary data storage

- PostgreSQL with PG Vector: Analytics and embeddings

- Redis: Caching layer

The customer owns and operates all of this. We have zero access to their environment. No backdoors, no admin overrides, no "emergency access" provisions. If they want us to never see their data, we never see their data.

The Three Pillars

The architecture delivers on three critical requirements that enterprises told us they couldn't compromise on:

1. Complete Data Sovereignty

Every agent interaction, every workflow execution, every encryption key, every log stays within the customer's infrastructure. The prompts their users send, the responses the agents generate, the context windows, the tool calls - all of it remains in-house.

We designed the system so that Adopt AI has no visibility into what's happening inside the customer's environment. Not because we don't want to help them succeed, but because they need the confidence that their data is theirs alone.

2. End-to-End Observability

Here's where we bust the biggest myth about on-prem: that it means flying blind.

Every agent action in the Adopt AI platform is fully traceable. From trigger to execution to response, the customer can see exactly what happened, why it happened, what data was accessed, and how the decision was resolved. That telemetry integrates natively with whatever monitoring tools they already use - Datadog, Grafana, or any standard logging infrastructure.

This isn't just for peace of mind. It's for compliance audits, security reviews, and internal debugging. When an agent does something unexpected, the customer's team can trace the entire decision path without calling us. When they need to prove to an auditor that sensitive data was handled correctly, the logs are right there in their own systems.
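
As an illustration only, assuming telemetry is emitted as structured JSON on stdout (which container log collectors for tools such as Datadog and Grafana Loki can typically ingest), each agent step can be recorded as a span that shares a trace ID with the rest of its workflow run. The field names and the `make_tracer` helper are hypothetical, not Adopt AI's actual telemetry schema; production systems often use OpenTelemetry for this instead.

```python
import json
import time
import uuid
from contextlib import contextmanager
from typing import Any, Callable, Iterator


def make_tracer(emit: Callable[[str], None] = print):
    """Build a span() context manager that emits one JSON line per agent step.

    `emit` defaults to print (stdout), which in-cluster log collectors usually
    tail; any other sink with the same signature can be passed in instead.
    """

    @contextmanager
    def span(trace_id: str, name: str, **attrs: Any) -> Iterator[dict]:
        record = {
            "trace_id": trace_id,             # shared by every step of one workflow run
            "span_id": uuid.uuid4().hex[:16],
            "name": name,                     # e.g. "agent.trigger", "tool.call"
            "attrs": attrs,                   # what was accessed, with which inputs
        }
        start = time.monotonic()
        try:
            yield record                      # caller may add attrs while the step runs
            record["status"] = "ok"
        except Exception as exc:
            record["status"] = "error"
            record["error"] = repr(exc)
            raise
        finally:
            record["duration_ms"] = round((time.monotonic() - start) * 1000, 3)
            emit(json.dumps(record, default=str))

    return span


if __name__ == "__main__":
    span = make_tracer()
    run_id = uuid.uuid4().hex
    with span(run_id, "agent.trigger", workflow="ticket-triage"):
        pass
    with span(run_id, "tool.call", tool="crm.lookup") as step:
        step["attrs"]["records_read"] = 3
```

Because the record is emitted in a `finally` block before the exception is re-raised, a failed tool call still leaves a trace line with its status and duration.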

You don't trade security for insight. You get both.

3. Self-Serve Deployment and Management

We built an enterprise portal that serves as the single pane of glass for the customer's DevOps team. They pull Docker images and Helm charts, download YAML configuration files, input their own credentials, and deploy with one command.

Updates work the same way. When we release a new version, they run a single Helm upgrade command. No coordination calls, no deployment windows with our engineering team, no dependency on our availability.
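
Since the customer supplies their own credentials, a pre-flight check run before the one-command install or upgrade can catch missing values and datastore endpoints that would send data outside the cluster. This is a hypothetical sketch: the key names, the dotted-path lookup, and the internal hostname suffix passed in are assumptions, not the actual schema of Adopt AI's configuration files.

```python
from urllib.parse import urlparse

# Hypothetical keys; the real configuration files define their own schema.
REQUIRED_KEYS = ("license_key", "image_registry", "mysql.url", "postgres.url", "redis.url")
DATASTORE_KEYS = ("mysql.url", "postgres.url", "redis.url")


def get_path(config: dict, dotted: str):
    """Look up a nested value such as 'mysql.url'; return None if absent."""
    node = config
    for part in dotted.split("."):
        if not isinstance(node, dict) or part not in node:
            return None
        node = node[part]
    return node


def preflight(config: dict, internal_suffixes: tuple) -> list:
    """Return a list of problems; an empty list means the config looks deployable."""
    problems = []
    for key in REQUIRED_KEYS:
        if get_path(config, key) in (None, ""):
            problems.append(f"missing required value: {key}")
    for key in DATASTORE_KEYS:
        url = get_path(config, key)
        if url:
            host = urlparse(url).hostname or ""
            # str.endswith accepts a tuple, so any listed internal suffix matches.
            if not host.endswith(internal_suffixes):
                problems.append(f"{key} points outside the cluster: {host}")
    return problems
```

For example, `preflight(config, (".svc.cluster.local",))` would flag a Redis URL that points at a public hostname before anything is deployed.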

And when something goes wrong? The customer generates a support bundle - a privacy-preserving package of only the necessary logs (error logs, system state, relevant telemetry) - and uploads it through the enterprise portal. Our engineers can review the logs and diagnose the issue without ever touching customer data or accessing their environment.
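
A support bundle along these lines can be sketched with the Python standard library alone. This is a minimal sketch, assuming structured JSON log lines with a `level` field; the redaction patterns, file names, and the `build_support_bundle` function are hypothetical and far from exhaustive, and a real bundle would use a rule set reviewed and approved by the customer's security team.

```python
import io
import json
import re
import zipfile

# Illustrative redaction rules only; not an exhaustive or approved list.
REDACTIONS = [
    (re.compile(r"(?i)bearer\s+[a-z0-9._\-]+"), "Bearer [REDACTED]"),
    (re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+"), "[EMAIL]"),
    # [^\s"]+ stops at a closing quote so the surrounding JSON stays valid.
    (re.compile(r'(?i)(password|api_key|secret)=[^\s"]+'), r"\1=[REDACTED]"),
]
KEEP_LEVELS = {"ERROR", "CRITICAL"}


def redact(text: str) -> str:
    for pattern, replacement in REDACTIONS:
        text = pattern.sub(replacement, text)
    return text


def build_support_bundle(log_lines, system_state: dict) -> bytes:
    """Zip only error-level log records (redacted) plus a redacted state summary."""
    kept = []
    for line in log_lines:
        try:
            record = json.loads(line)
        except json.JSONDecodeError:
            continue  # drop unstructured lines rather than risk leaking them
        if not isinstance(record, dict):
            continue
        if str(record.get("level", "")).upper() in KEEP_LEVELS:
            kept.append(redact(json.dumps(record)))
    buf = io.BytesIO()
    with zipfile.ZipFile(buf, "w", compression=zipfile.ZIP_DEFLATED) as zf:
        zf.writestr("errors.jsonl", "\n".join(kept))
        zf.writestr("system_state.json", redact(json.dumps(system_state, indent=2)))
    return buf.getvalue()
```

Filtering by level first and redacting second keeps prompts and responses logged at lower levels out of the bundle entirely, rather than relying on redaction to catch them.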

The entire lifecycle - deploy, configure, update, troubleshoot - is completely self-serve and seamless.

The Challenge That Evolved: MCP Support

One area that came up during the process was support for Model Context Protocol (MCP) servers. As the AI ecosystem matures, enterprises increasingly need their agents to interact with external tools and services through standardized protocols. That expectation carries into the on-prem environment.

The challenge was ensuring MCP connectivity worked reliably within a locked-down, self-hosted setup where network policies are strict and every external connection is scrutinized. We adapted the architecture to support MCP integrations within the customer's security boundaries, giving their agents the ability to connect to approved tools and services without compromising the isolation of the on-prem environment.
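
One common way to enforce "approved tools only" for remote (HTTP-based) MCP servers is an explicit host allowlist checked before any connection is opened. The sketch below is an assumption about how such a check could look, not a description of Adopt AI's implementation; `is_mcp_endpoint_allowed` and `host_matches` are hypothetical names, and in practice a check like this would sit alongside Kubernetes network policies rather than replace them.

```python
from urllib.parse import urlparse


def host_matches(host: str, entry: str) -> bool:
    """Exact match, or suffix match for entries written with a leading dot."""
    entry = entry.lower()
    if entry.startswith("."):
        return host.endswith(entry)  # ".corp.example" matches "jira.corp.example" only
    return host == entry


def is_mcp_endpoint_allowed(url: str, allowlist, allowed_schemes=frozenset({"https"})) -> bool:
    """Decide, before connecting, whether an MCP server URL is on the approved list."""
    parsed = urlparse(url)
    if parsed.scheme not in allowed_schemes:  # urlparse lowercases the scheme
        return False
    host = parsed.hostname or ""              # .hostname is lowercased, userinfo stripped
    if not host:
        return False
    return any(host_matches(host, entry) for entry in allowlist)
```

Leading-dot entries such as `.corp.example` match subdomains only, so a lookalike host such as `mcp.tools.internal.evil.com` is rejected even when `mcp.tools.internal` is approved.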

It was a good reminder that on-prem doesn't mean closed off. It means the customer controls what comes in and out.

The Real-Time Sync Innovation

One of the most powerful features we built is Change Data Capture (CDC) - a real-time syncing mechanism that automatically pushes actions or changes built on Adopt AI's cloud platform back to the customer's on-premise system.

Here's how it works in practice:

The customer's team builds AI agents and workflows using Adopt AI's cloud interface. That's where they have access to our full builder experience, the latest features, the workflow designer, the testing environment. It's the fastest way to develop.

But when those agents run, when they execute workflows, when they touch customer data - all of that happens on-premise. The CDC layer syncs the agent definitions from cloud to on-prem in real-time, so there's no manual export/import cycle, no version drift, no deployment lag.
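
The "no version drift" property depends on applying changes idempotently and in order. A minimal sketch, assuming each change event carries a full definition snapshot, a per-entity version number that only increases, and a schema version string; `ChangeEvent`, `OnPremDefinitionStore`, and `SUPPORTED_SCHEMA_MAJOR` are hypothetical names, not Adopt AI's CDC format.

```python
from dataclasses import dataclass, field

SUPPORTED_SCHEMA_MAJOR = 2  # hypothetical schema version the on-prem build understands


@dataclass
class ChangeEvent:
    entity_id: str        # e.g. an agent or workflow definition ID
    version: int          # increases with every cloud-side edit of this entity
    op: str               # "upsert" or "delete"
    schema_version: str   # e.g. "2.3"
    payload: dict = field(default_factory=dict)  # full definition snapshot, not a diff


class OnPremDefinitionStore:
    def __init__(self) -> None:
        self.definitions: dict = {}
        self.applied_versions: dict = {}

    def apply(self, event: ChangeEvent) -> bool:
        """Apply one event; return False if it was a duplicate or stale replay."""
        major = int(event.schema_version.split(".")[0])
        if major != SUPPORTED_SCHEMA_MAJOR:
            raise ValueError(f"schema {event.schema_version} unsupported; upgrade on-prem first")
        if event.version <= self.applied_versions.get(event.entity_id, 0):
            return False  # already applied (or older): idempotent no-op
        if event.op == "upsert":
            self.definitions[event.entity_id] = event.payload
        elif event.op == "delete":
            self.definitions.pop(event.entity_id, None)
        else:
            raise ValueError(f"unknown op {event.op!r}")
        self.applied_versions[event.entity_id] = event.version
        return True
```

Replayed or stale events become no-ops, and an event from an incompatible schema major version fails loudly, which in this sketch signals that the on-prem side needs upgrading before syncing further.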

The customer gets the best of both worlds: the speed and convenience of cloud-based development with the security and control of on-premise execution. A license key facilitates the secure data syncing between the two environments.
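
The article does not specify how the license key secures the sync, so the following is only one plausible design: derive a signing key from the license key and attach an HMAC-SHA256 signature and timestamp to each batch, so the on-prem side can reject tampered or stale payloads. All function names and the `cdc-sync-v1` label are hypothetical.

```python
import hashlib
import hmac
import json
import time
from typing import Optional


def derive_sync_key(license_key: str) -> bytes:
    # Derive a purpose-specific key instead of using the license key directly.
    return hmac.new(license_key.encode(), b"cdc-sync-v1", hashlib.sha256).digest()


def _canonical(body: dict) -> bytes:
    # Stable serialization so both sides hash exactly the same bytes.
    return json.dumps(body, sort_keys=True, separators=(",", ":")).encode()


def sign_batch(license_key: str, events: list, now: Optional[float] = None) -> dict:
    body = {"issued_at": int(time.time() if now is None else now), "events": events}
    sig = hmac.new(derive_sync_key(license_key), _canonical(body), hashlib.sha256).hexdigest()
    return {"body": body, "signature": sig}


def verify_batch(license_key: str, envelope: dict, max_age_s: int = 300,
                 now: Optional[float] = None) -> list:
    expected = hmac.new(derive_sync_key(license_key), _canonical(envelope["body"]),
                        hashlib.sha256).hexdigest()
    # Constant-time comparison avoids leaking how many characters matched.
    if not hmac.compare_digest(expected, envelope["signature"]):
        raise ValueError("signature mismatch: batch rejected")
    current = time.time() if now is None else now
    if current - envelope["body"]["issued_at"] > max_age_s:
        raise ValueError("batch too old: possible replay")
    return envelope["body"]["events"]
```

HMAC provides integrity, not confidentiality, so the transport would still need TLS, and a batch replayed within the age window would still pass; a per-entity version check on the receiving side would turn such a replay into a no-op. A shared secret also means the on-prem side could, in principle, sign batches itself; an asymmetric scheme such as Ed25519 avoids that if it matters.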

This two-instance architecture - cloud for building, on-prem for running - is what makes the platform practical for enterprise teams. They don't have to choose between developer experience and security posture. They get both.

What This Unlocks for Enterprises

When the customer's team saw the full system working - agents running on their infrastructure, fully traceable in their monitoring stack, with CDC syncing in real-time - the reaction was genuine surprise.

"Wait, that's it? It's already working?"

They'd gone through evaluations with other vendors where just the scoping conversation took longer than our entire deployment. The moment of disbelief turning into confidence is exactly what we want every enterprise customer to feel.

Measurable Impact

The results speak for themselves:

- Complete data sovereignty without trading away observability

- Compliance audits and security reviews made significantly easier because every agent action is documented and traceable in their own systems

- Deployment updates reduced to a single Helm upgrade command

- Time-to-deploy measured in weeks, not months or quarters

But beyond the metrics, there's something more fundamental: enterprises can now run a full swarm of AI agents - deeply integrated with their internal systems - with complete confidence that nothing leaves their perimeter, and complete visibility into everything the agents do.

Who This Is For

This solution is built for mid-to-large enterprises with:

- Security-first culture and strict data governance requirements

- Mature Kubernetes-based infrastructure already in place

- Need for AI agents that interact deeply with internal systems and APIs

- Compliance requirements that make cloud-only solutions non-starters

If your organization fits that profile, you already know that most AI platforms aren't built for you. They're built for startups and scale-ups where "move fast and break things" is still the operating model. For enterprises where breaking things means regulatory violations, customer trust violations, and security incidents, you need a different approach.

Lessons for Engineers Building Enterprise AI

If you're building an AI platform and enterprise is even remotely on your roadmap, here's what we learned:

1. Don't Treat On-Prem as an Afterthought

Make the architectural decisions early. Use open-source where you can. Keep your services modular and isolated. Avoid hard dependencies on managed cloud services that won't exist in a customer's data center.

Think about observability from the start, not as a bolt-on. Every action your agents take should be traceable by default, not as a premium feature you add later.

The biggest trap is building everything for the cloud and then trying to reverse-engineer it into an on-prem package two years later. That's where the real nightmare begins. By then, you have technical debt, you have cloud-specific assumptions baked into every layer, and you have a team that's optimized for cloud deployment velocity, not on-prem compatibility.

Start with the harder requirement. You can always simplify for cloud-only deployments later. The reverse is much harder.

2. It Takes a Team

This wasn't a solo effort. The deployment came together because we had clear ownership and tight collaboration:

- I led the end-to-end development and deployment

- Roshan built the UI, improved the observability layer, and managed all internal authentication and version matching for data schemas

- Rahul B, our CTO, provided architectural support and made key design decisions that kept the system deployable

Cross-functional execution matters. On-prem isn't just an engineering challenge. It's a product challenge, a DevOps challenge, and a customer success challenge all rolled into one.

3. What We'd Do Differently

Looking back, we'd invest even earlier in documentation and runbooks for the customer's DevOps team. The deployment itself was smooth, but we learned that the more self-serve material you give an enterprise team upfront, the fewer support cycles you need down the line.

We've since built that into the enterprise portal experience. New customers now get comprehensive setup guides, troubleshooting playbooks, and architecture diagrams before they even start the deployment. It's made a noticeable difference in how quickly teams can get productive.

Final Thoughts

Here's what I learned from this engagement - when it comes to AI agents in the enterprise, security and observability aren't separate concerns. They're two sides of the same trust equation.

Enterprises don't just want powerful agents. They want agents they can fully own, fully audit, and fully see. Building for that trust changes how you think about architecture from the ground up.

You can't bolt it on later. You can't promise "we'll add on-prem support in a future release" and then try to retrofit a cloud-native platform for customer data centers. The dependencies are too deep, the assumptions are too baked in, and the timeline stretches from weeks to quarters.

But if you start with the assumption that your most important customers will demand complete control over their data and complete visibility into what your agents are doing, you build differently. You choose different libraries. You design different interfaces. You think about deployment, monitoring, and troubleshooting from the customer's perspective, not just from yours.

That's what made the weekend sprint possible. That's what made the two-to-four-week deployment timeline real. And that's what makes enterprises look at our platform and say, "Finally, something built for us."

The combination of security, observability, and self-sufficiency is rare in the AI platform space. But it's not optional if you want to earn the trust of enterprises that are serious about deploying AI agents at scale.

We built it that way from the start. And when that customer walked in and said "I'll only buy if you can ship it on-premise," we were ready.

Want to learn more about Adopt AI's on-premise deployment? Contact our team to discuss your enterprise requirements.
