Learn how enterprises use automated document processing to capture data, reduce manual work, and streamline workflows with AI.

TL;DR

- Treating ADP as a data extraction task is why most implementations fail. The goal isn't getting data out of a document. It's ensuring that data triggers the correct action in your ERP or financial system, every single time.

- Fixed pipelines break the moment a vendor changes an invoice layout or a regulator updates a form. Systems that can't reason about document variability in real time will always need a human correction layer sitting on top of them.

- When a reviewer fixes an extraction error and that correction disappears into a ticket, the same mistake repeats next month. Human intervention needs to be a first-class execution state, not a dead end outside the pipeline.

- Confidence thresholds set at deployment drift silently as document types change. Without continuous validation against live master data, your system will start extracting the wrong data with high confidence and no alerts.

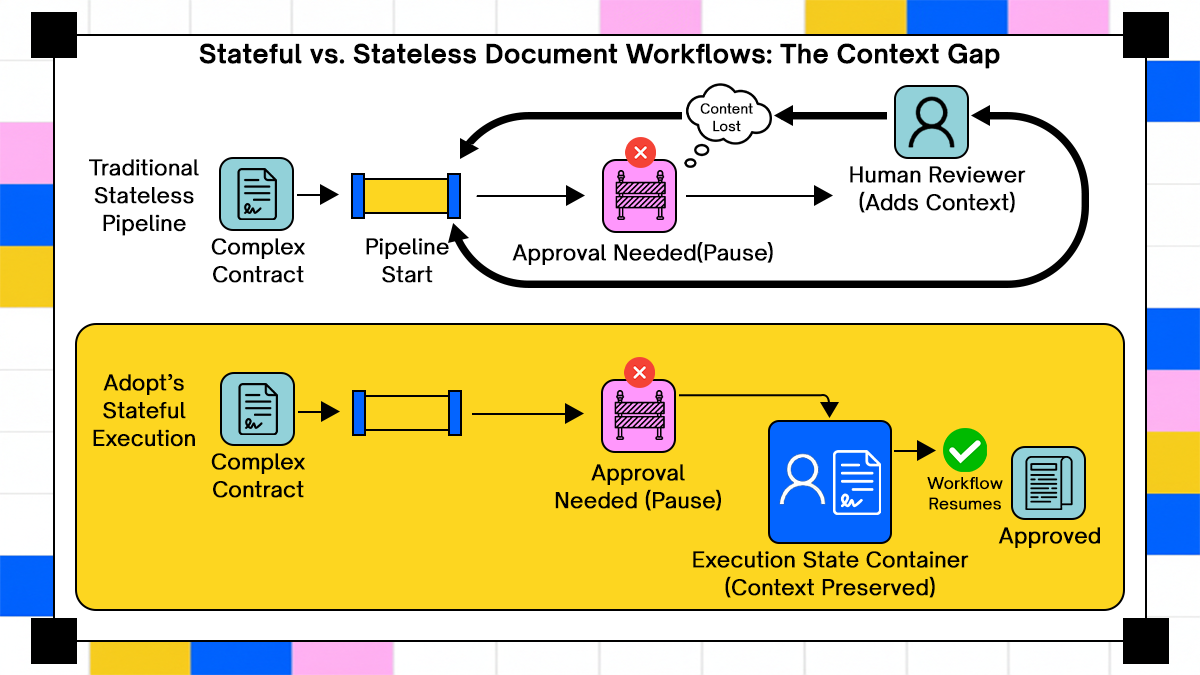

- Stateless pipelines lose the "why" behind every decision. Loan applications, contract negotiations, and claims that span weeks need stateful models that track version provenance and maintain audit trails across every pause and restart.

- Manually mapping fields to shifting ERP schemas is a losing battle. Systems that read the current API structure before attempting to write remove the risk that a "successful" extraction still fails because the destination changed.

The premise of automated document processing has always been simple: stop paying people to read the same kinds of documents repeatedly. Feed them into software, get structured data out the other side, and route that data into the systems that need it. Twenty years of investment in this idea should have made it a solved problem.

A global survey by the Institute of Financial Operations and Leadership (IFOL) found that more than 68% of businesses are still manually keying invoice data into their ERP and accounting software. The same survey found that the top two AP challenges were delays caused by exception processing and too much manual data entry, in that order. Those two problems are supposed to be the ones automation solves. They're also the ones it consistently doesn't.

The pattern shows up in practitioner communities too. Threads in r/sysadmin regularly surface the same complaint: teams implement document automation, watch it work for clean inputs, and then quietly build a correction layer on top for everything else. The automation is real, but so is the manual layer sitting on top of it.

The root cause isn't that the tools are bad. It's that most ADP implementations were scoped as extraction projects and deployed as pipelines, when the real problem is an execution problem: what do you do with the data once you have it, how do you handle the documents that don't conform, and what happens when a human has to step in halfway through? This article works through those questions from the architecture up.

What Is Automated Document Processing (ADP)?

Automated Document Processing is the use of software to ingest, classify, extract data from, validate, and route documents without requiring a human to read each one. That's the full scope. Any definition narrower than that is describing one component of the system, not the system itself.

The 'document' in ADP includes PDFs, scanned images, Word files, emails with attachments, EDI transactions, and structured forms. The processing pipeline has to handle all of them, often arriving from the same source on the same day.

What makes it 'automated' is that the pipeline executes without human initiation at each step, not that humans are removed entirely. Most mature ADP deployments still involve humans at exception and approval points. The question is whether those human touchpoints are designed into the execution model or bolted on as workarounds. That distinction matters more than the number of connectors or the accuracy of extraction.

Understanding what ADP is supposed to cover sets up the more important question: what does it actually cover in practice, and where does the gap start?

What Automated Document Processing Is Actually Supposed to Do

At enterprise scale, ADP covers six sequential stages. Most implementations only fund the first three.

1. Ingestion: receiving documents from all source channels

2. Classification: identifying document type before extraction runs

3. Extraction: pulling structured fields from unstructured content

4. Validation: checking whether extracted values are internally consistent and match business rules

5. Routing: sending the document and its data to the right next step

6. Downstream action: writing to ERP, triggering payment, filing compliance records

Most implementations stop at step three and call it done. The downstream routing and action steps are where process integrity collapses. Without execution context, extracted data is just a file in a queue. It doesn't know where it came from, what triggered processing, or what happens if the next step fails.
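To make "execution context" concrete, here is a minimal sketch of a record that travels with a document through the six stages. The class name `DocumentRun`, its fields, and the sample values are illustrative assumptions, not part of any specific product.

```python
from dataclasses import dataclass, field
from typing import Any, Optional

STAGES = ["ingestion", "classification", "extraction",
          "validation", "routing", "downstream_action"]


@dataclass
class DocumentRun:
    """One document's pass through the pipeline, with its execution context."""
    doc_id: str
    source: str    # where the document came from
    trigger: str   # what started processing
    data: dict[str, Any] = field(default_factory=dict)
    history: list[dict[str, Any]] = field(default_factory=list)

    def record(self, stage: str, ok: bool, detail: str = "") -> None:
        # Append-only log so a later failure can be traced to its stage.
        self.history.append({"stage": stage, "ok": ok, "detail": detail})

    def next_stage(self) -> Optional[str]:
        # Resume after the last successful stage; a failed stage is retried.
        done = [h["stage"] for h in self.history if h["ok"]]
        for stage in STAGES:
            if stage not in done:
                return stage
        return None


run = DocumentRun(doc_id="inv-001", source="ap-inbox", trigger="email_received")
run.record("ingestion", ok=True)
run.record("classification", ok=True, detail="invoice")
run.record("extraction", ok=True)
run.record("validation", ok=False, detail="total does not match line items")
print(run.next_stage())  # validation
```

Because the history stays attached to the document, a failure at validation can be retried or escalated without re-running ingestion, and the record answers the "where did this come from" question on its own.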

How ADP Differs From Basic OCR and Template-Based Parsing

OCR converts pixels to text. Template parsing maps known field positions to output fields. Neither handles variation, ambiguity, or schema drift. They're useful components but they don't constitute a processing pipeline.

Modern ADP layers classification models and LLM-based extraction on top of OCR, which handles clean and consistent documents well and degrades predictably on everything else. The degradation isn't random. It follows patterns: unusual layouts, handwritten annotations, scanned pages at odd angles, and table structures that span multiple pages all cause specific failure modes that show up in exception queues.

The real enterprise document environment is not consistent. Vendors change invoice layouts without notice. Contracts arrive in a dozen formats from six jurisdictions. Scanned paper from a 2008 acquisition appears in a 2025 compliance request. Any ADP architecture that assumes input consistency will spend a growing portion of its capacity handling the exceptions that assumption creates. The architecture that handles these inconsistencies, or fails to, is built from a few core components.

ADP Pipeline - Core Architectural Components

Every ADP pipeline is built from the same set of components, but how those components are connected, and what guarantees each one makes, determines whether the pipeline holds up at enterprise scale. Here's what each layer actually does and where each one typically breaks.

Ingestion and Classification: Where Garbage Enters

Documents arrive from multiple channels simultaneously, each with different quality and metadata problems:

- Email attachments arrive with inconsistent naming conventions and no guaranteed format

- Portal uploads come in whatever format the user chose, often without validation

- API pushes from third-party systems assume the receiving end handles schema changes

- Fax-to-PDF services introduce compression artifacts that OCR struggles to resolve

Classification models assign document type before extraction runs. Misclassification at this stage cascades through every subsequent step. An invoice classified as a purchase order extracts the wrong fields, passes validation against the wrong rules, and routes to the wrong downstream system. The error isn't recoverable without reprocessing from scratch.

Classification confidence scores are rarely surfaced to operators. Low-confidence assignments proceed as if they were certain, and downstream failures get attributed to extraction rather than classification. This makes the root cause invisible in most logging architectures.
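One way to surface classification confidence is to gate extraction on it and write the score into the log record. The sketch below assumes a hypothetical cut-off of 0.85 and a hypothetical `classification_review` step; real values would come from calibration on production data.

```python
from dataclasses import dataclass

# Hypothetical cut-off; a real value would come from calibration data.
MIN_CLASSIFICATION_CONFIDENCE = 0.85


@dataclass
class Classification:
    doc_id: str
    label: str
    confidence: float


def gate_classification(result: Classification) -> dict:
    """Decide whether extraction may run, and keep the score in the log record."""
    certain = result.confidence >= MIN_CLASSIFICATION_CONFIDENCE
    return {
        "doc_id": result.doc_id,
        "label": result.label,
        "classification_confidence": result.confidence,
        "next_step": "extraction" if certain else "classification_review",
    }


high = gate_classification(Classification("doc-1", "invoice", 0.97))
low = gate_classification(Classification("doc-2", "purchase_order", 0.58))
print(high["next_step"])  # extraction
print(low["next_step"])   # classification_review
```

With the score stored alongside the label, a downstream failure can be attributed to classification rather than extraction when the two disagree.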

Extraction Models and Their Tolerance for Real-World Variation

Named entity recognition, field-level extraction, and table parsing each carry different failure modes. An extraction model that handles standard invoices well can fail completely on a credit note with the same fields arranged differently. The model was trained on one layout; the credit note presents a different layout; the result is a confident but wrong extraction.

Extraction output is probabilistic. Most pipelines treat it as deterministic. That mismatch is where data quality problems begin to accumulate silently. The pipeline logs a success because no error was thrown. The extracted value is wrong, but within the confidence threshold. Months later, a finance audit finds recurring line-item errors that trace back to a specific supplier whose invoice format changed.

Schema drift compounds extraction errors in exactly this way. A supplier who changes their invoice template mid-year produces months of incorrect line-item data before anyone notices the extraction model is reading the wrong field positions. The pipeline never fails. It just extracts the wrong numbers with high confidence.

Validation Logic and the Cost of Skipping It

Post-extraction validation checks whether extracted values are internally consistent and match known business rules. Most pipelines implement minimal validation because building rules is slow and maintaining them as business logic evolves is slower.

Getting the threshold calibration right requires ongoing attention to extraction quality in production, not just in test environments. The trade-off is direct:

- Validation too strict: false positives flood manual review queues, which defeats the purpose

- Validation too loose: bad extractions enter downstream systems and surface only during payment failures, compliance audits, or customer complaints
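As a rough illustration of post-extraction validation, the sketch below checks two rules: the vendor exists in master data, and the line items add up to the header total. The `VENDOR_MASTER` table, the field names, and the default tolerance are assumptions made for the example.

```python
from decimal import Decimal

# Hypothetical vendor master data; in practice this would be read from the ERP.
VENDOR_MASTER = {"V-1001": "Acme Supplies", "V-1002": "Globex Ltd"}


def validate_invoice(invoice: dict, tolerance: Decimal = Decimal("0.01")) -> list[str]:
    """Return a list of validation failures; an empty list means the invoice passed."""
    failures = []
    if invoice["vendor_id"] not in VENDOR_MASTER:
        failures.append("unknown vendor")
    line_total = sum((Decimal(li["amount"]) for li in invoice["line_items"]), Decimal("0"))
    if abs(line_total - Decimal(invoice["total"])) > tolerance:
        failures.append(f"line items sum to {line_total}, header total is {invoice['total']}")
    return failures


good = {"vendor_id": "V-1001", "total": "150.00",
        "line_items": [{"amount": "100.00"}, {"amount": "50.00"}]}
bad = {"vendor_id": "V-9999", "total": "160.00",
       "line_items": [{"amount": "100.00"}, {"amount": "50.00"}]}

print(validate_invoice(good))  # []
print(validate_invoice(bad))
```

The `tolerance` parameter is where the strict-versus-loose trade-off shows up: widening it lets rounding differences through, and it also lets real discrepancies through.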

Routing, Human Review, and the Re-Entry Problem

Documents that fail automated processing get routed to human review. This step is almost always modeled outside the execution pipeline, which means context is lost when a reviewer makes a correction.

When the document re-enters the pipeline after review, it typically starts from the beginning. The correction isn't recorded as a signal. The system doesn't know why the document went to review, what the reviewer changed, or why. The same document type will fail the same way next time, because the review process had no mechanism to feed the correction back into the extraction behavior.

Human review that exists outside the execution model is a dead end, not a feedback loop. It absorbs labor, fixes individual documents, and leaves the underlying problem intact. These component-level problems compound into recognizable failure patterns that show up across almost every enterprise ADP deployment. The next section covers those patterns, because knowing them is half the diagnosis.
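A small sketch of what modeling review inside the execution model could look like: review is a state, the correction is stored next to the value it replaced, and the document resumes at routing instead of starting over. The class, state names, and reviewer ID are hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class ReviewableDocument:
    doc_id: str
    fields: dict
    state: str = "extracted"
    review_reason: str = ""
    corrections: list = field(default_factory=list)

    def send_to_review(self, reason: str) -> None:
        self.state = "in_review"
        self.review_reason = reason

    def apply_correction(self, field_name: str, new_value, reviewer: str) -> None:
        # Keep the original value next to the corrected one so the pair
        # can later serve as a labeled example.
        self.corrections.append({
            "field": field_name,
            "extracted": self.fields.get(field_name),
            "corrected": new_value,
            "reviewer": reviewer,
            "reason": self.review_reason,
        })
        self.fields[field_name] = new_value

    def resume(self) -> str:
        # Resume at routing rather than re-running ingestion and extraction.
        if self.state != "in_review":
            raise ValueError("document is not in review")
        self.state = "routing"
        return self.state


doc = ReviewableDocument("inv-17", {"total": "1,200.00"})
doc.send_to_review("total failed numeric check")
doc.apply_correction("total", "1200.00", reviewer="reviewer-1")
print(doc.resume())  # routing
```

The `corrections` list is the piece most pipelines lack: it records why the document went to review and what changed, which is the raw material for a feedback loop.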

Common Failure Modes in Enterprise ADP Deployments

The failure modes below aren't edge cases. They show up in most deployments at scale, and they share a common thread: they're invisible until something downstream breaks badly enough to prompt an investigation.

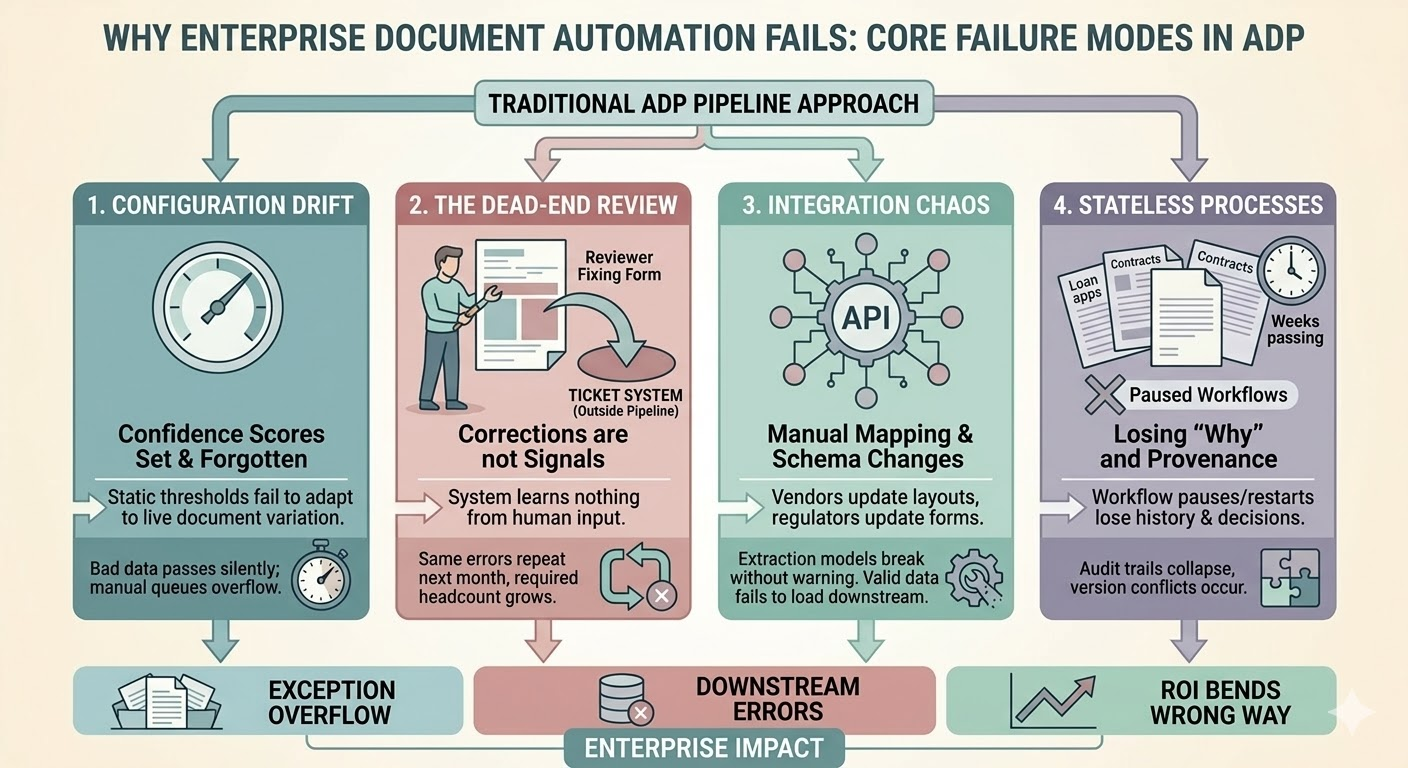

Confidence Score Thresholds Set Once and Never Revisited

Teams configure extraction confidence thresholds at deployment and rarely change them. Document quality drifts over time, new document types arrive, and thresholds calibrated on 10,000 training documents stop reflecting reality at 500,000 production documents. The calibration problem compounds as the document mix changes.

Thresholds set too high increase manual review volume. Thresholds set too low let bad extractions pass automatically. Neither outcome is acceptable at scale, and few teams systematically monitor the drift. The problem surfaces when exception queue volume becomes unmistakable.
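Monitoring for threshold drift can start simply: compare the error rate among auto-accepted extractions in a baseline window against a recent one. This sketch assumes each sample is a `(confidence, was_correct)` pair, where correctness comes from some later check such as review or reconciliation; the function names and the 0.02 alert margin are illustrative.

```python
def auto_accept_error_rate(samples, threshold):
    """Share of auto-accepted extractions that a later check found wrong.

    `samples` is a list of (confidence, was_correct) pairs.
    """
    accepted = [ok for conf, ok in samples if conf >= threshold]
    if not accepted:
        return 0.0
    return accepted.count(False) / len(accepted)


def threshold_has_drifted(baseline, current, threshold, max_increase=0.02):
    """Flag when the error rate above the threshold rises by more than max_increase."""
    before = auto_accept_error_rate(baseline, threshold)
    after = auto_accept_error_rate(current, threshold)
    return (after - before) > max_increase


# Synthetic data: same confidence, but more auto-accepted errors in the recent window.
baseline = [(0.95, True)] * 99 + [(0.95, False)] * 1
current = [(0.95, True)] * 92 + [(0.95, False)] * 8

print(auto_accept_error_rate(baseline, 0.90))        # 0.01
print(auto_accept_error_rate(current, 0.90))         # 0.08
print(threshold_has_drifted(baseline, current, 0.90))  # True
```

Note that the confidence values are identical in both windows. That is the failure this check is meant to catch: the scores look stable while the accuracy behind them has moved.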

Exception Volumes That Grow Until They Exceed Capacity

ADP is sold on exception reduction, and exceptions grow instead, because the system routes anything uncertain to humans rather than resolving ambiguity within the execution context. As document variety increases, so does the proportion of documents that hit uncertainty thresholds.

An OpenText study of supply chain document processing found that nearly 80% of manufacturers experience greater than 1% exception rates on automated transactions, with 20% experiencing rates above 5%. At those volumes, exception queues grow. Teams add headcount. The automation ROI calculation gets revised. This is the most common outcome in large-scale ADP deployments, and it's rarely discussed openly in vendor case studies.

Multi-System Downstream Actions That Assume Clean Inputs

ERP entries, payment approvals, and compliance filings all assume upstream document data is correct. When it isn't, the failure often occurs two or three systems downstream from where the extraction error originated. The ERP records the wrong GL code. The payment goes to the wrong entity. The compliance report doesn't match the source document.

Tracing that failure back to a document processing step requires logs that most ADP platforms don't keep in a usable form. The document processing system logged a success. The failure belongs to no one's monitoring dashboard. The investigation becomes a manual archaeology project across system logs that weren't designed to cross-reference.

Regulatory Documents That Change Without Notice

Government forms, tax documents, and compliance filings change their layouts and field requirements on regulatory cycles. ADP pipelines that rely on template-based extraction break when forms change, and there's often no alert that the break occurred. Processing continues. Confidence scores don't change. The wrong fields populate for the new form version.

Healthcare and financial services experience this acutely. A tax form version change can corrupt an entire quarter's filings before anyone catches that the extraction model is mapping the old field positions to the new layout. The business impact is proportional to how long the wrong version ran before detection.

These failure modes get worse when the workflow is long-running, spans multiple parties, or requires human approval. Those scenarios are where most ADP pipelines simply weren't designed to operate.

Long-Running Document Workflows as a Stress Test for ADP Pipelines

Vendor examples often focus on near-instantaneous data transfers. Real enterprise document workflows span days or weeks and include approvals, corrections, negotiations, and external dependencies. These workflows expose every architectural shortcut in the pipeline.

Contracts, Claims, and Loan Processing: Where Time Is the Variable

A mortgage application touches underwriting, title, appraisal, compliance review, and final approval over a period of weeks. A contract goes through redlines from multiple parties before reaching final execution.

These workflows require pause, resume, rollback, and audit capabilities. Treating them as stateless jobs means losing context every time the process pauses. When it resumes, it restarts without knowing what happened before, what human decisions were made, or why certain document versions were rejected. The automation becomes a series of disconnected extraction events rather than a coherent process.

Multi-Party Documents Where Versions Conflict

Contracts that go through negotiation cycles produce multiple versions. ADP systems that don't track version provenance extract from whichever version they receive, with no mechanism to detect that a newer version supersedes it. In legal and procurement contexts, acting on the wrong version has direct liability implications.

The version tracking problem is also a timing problem. Documents arrive out of sequence. A revised purchase order arrives after the original has already been processed. The system has no way to know the first version is now invalid. Both versions generate extraction events. Both route to downstream systems. The conflict surfaces later, manually.
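A minimal sketch of version provenance, assuming each document carries a logical key (such as a PO number) and an integer version. Both are assumptions; many real documents need the version inferred from dates or revision markers. The registry flags when a newer version supersedes one that may already have been processed.

```python
class VersionRegistry:
    """Tracks the highest version seen per logical document (e.g. a PO number)."""

    def __init__(self):
        self._latest = {}      # document key -> highest version seen
        self.superseded = []   # (key, version) pairs that need downstream attention

    def register(self, key: str, version: int) -> str:
        latest = self._latest.get(key)
        if latest is None:
            self._latest[key] = version
            return "process"
        if version > latest:
            # The earlier version may already have reached downstream systems.
            self.superseded.append((key, latest))
            self._latest[key] = version
            return "process_and_supersede"
        if version == latest:
            return "duplicate"
        return "stale"


registry = VersionRegistry()
print(registry.register("PO-7", 1))  # process
print(registry.register("PO-7", 2))  # process_and_supersede
print(registry.register("PO-7", 1))  # stale
print(registry.superseded)           # [('PO-7', 1)]
```

The `superseded` list is what turns a silent conflict into a work item: something downstream has to reverse or amend whatever version 1 triggered.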

Approval Chains That Break the Execution Model

When a document requires human approval mid-process, the pipeline stalls. If the approver requests changes, the document re-enters the pipeline without any record of why the previous version was rejected. The context the approver had (the specific objection, the requested modification) exists only in an email thread or a verbal conversation. The pipeline sees a new document submission with no history.

This creates audit gaps that compliance teams discover during reviews rather than during normal operations. The question 'why was this version approved after two rejections?' has no answer in the system logs. The answer lives in someone's inbox.

Structural Limitations of Traditional ADP Architectures

The failure modes described above aren't independent problems. They trace back to three architectural decisions that most ADP platforms share, decisions that made sense when documents were simpler and workflows were shorter.

Pipeline Design That Can't Adapt to Document Variability

Fixed pipelines assume documents are consistent enough that the same sequence of steps applies to all of them. Real document environments break this assumption constantly. The response is usually to add exception handlers for each variation, which extends the pipeline until it becomes unmaintainable.

Teams end up with branching logic that no one fully understands, maintained by whoever was present when each branch was added, and tested only against the cases that prompted its creation. New document variations trigger new branches. The pipeline grows; its behavior becomes harder to predict and harder to audit.

Extraction-Centric Design That Ignores Execution Context

Most ADP platforms are built around making extraction better. The execution layer (what happens with the extracted data, how failures are handled, how humans re-enter the process) is treated as the responsibility of a separate workflow tool. This split means the document platform handles ingestion and extraction while a separate system handles routing and action.

The integration between them is usually brittle. Data passes through an API or file export. The workflow tool picks it up. If the document platform retries, the workflow tool may receive the same data twice and create duplicate records. If the document platform fails silently, the workflow tool never receives the data and the process stalls with no alert. The gap between the two systems is where failures hide.
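The duplicate-on-retry case has a well-known mitigation: an idempotency key derived from the document and its payload, checked by the receiving side. The sketch below uses an in-memory `WorkflowInbox` as a stand-in for the workflow tool's intake; that class and the key format are assumptions for illustration.

```python
import hashlib
import json


def idempotency_key(doc_id: str, payload: dict) -> str:
    """Stable key for one document payload; identical retries yield the same key."""
    canonical = json.dumps(payload, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(f"{doc_id}:{canonical}".encode("utf-8")).hexdigest()


class WorkflowInbox:
    """Stand-in for the workflow tool's intake; stores each key at most once."""

    def __init__(self):
        self.records = {}

    def submit(self, doc_id: str, payload: dict) -> bool:
        key = idempotency_key(doc_id, payload)
        if key in self.records:
            return False  # retry of something already accepted
        self.records[key] = payload
        return True


inbox = WorkflowInbox()
payload = {"invoice_number": "INV-88", "total": "150.00"}
print(inbox.submit("doc-88", payload))  # True
print(inbox.submit("doc-88", payload))  # False
print(len(inbox.records))               # 1
```

This handles the retry half of the problem only. The silent-failure half needs the opposite signal: an alert when an expected submission never arrives.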

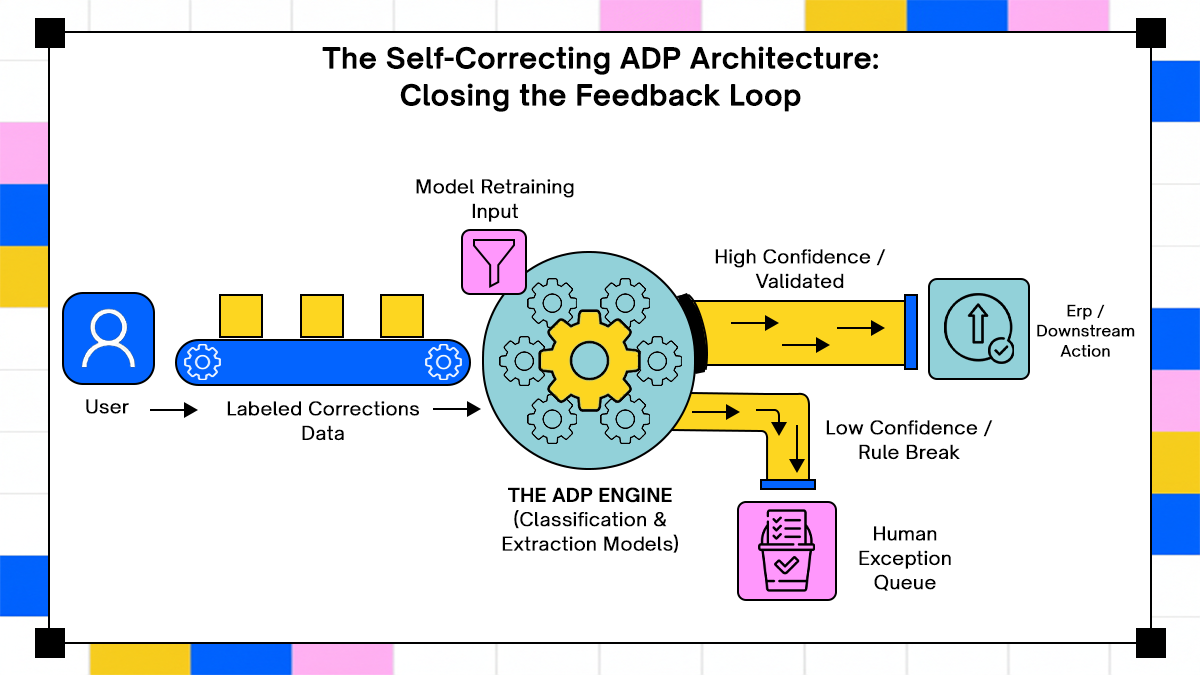

Feedback Loops That Don't Close

When a human reviewer fixes an extraction error, that correction rarely reaches the model that made the error. The system doesn't learn from its mistakes because there's no mechanism connecting reviewer actions to model behavior. The reviewer closes the ticket. The document goes to its downstream destination. The model that caused the error continues to produce the same error on similar documents.

Over time, the same document types fail in the same ways. The manual review queue reflects accumulated model debt that was never paid down. The headcount required to staff that queue grows proportionally with document volume. The automation efficiency curve bends the wrong direction.
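Even before any retraining, captured corrections can show where model debt is accumulating. This sketch counts corrections per vendor and field and reports the pairs that recur; the record shape (`vendor_id`, `field`) and the `min_count` default are assumptions for the example.

```python
from collections import Counter


def recurring_corrections(corrections, min_count=3):
    """Return (vendor_id, field) pairs corrected at least min_count times, most frequent first."""
    counts = Counter((c["vendor_id"], c["field"]) for c in corrections)
    return [(key, n) for key, n in counts.most_common() if n >= min_count]


# Synthetic review log: one vendor's total keeps getting fixed by hand.
review_log = [
    {"vendor_id": "V-1001", "field": "total"},
    {"vendor_id": "V-1001", "field": "total"},
    {"vendor_id": "V-1002", "field": "due_date"},
    {"vendor_id": "V-1001", "field": "total"},
]

print(recurring_corrections(review_log))  # [(('V-1001', 'total'), 3)]
```

A report like this points at a specific vendor layout rather than at "extraction quality" in general, which makes the fix much easier to scope.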

These are the problems that a different execution architecture has to solve. The next section covers how Adopt approaches document processing differently, based on what their platform actually does.

How Adopt Approaches Automated Document Processing

The capabilities described here are drawn directly from Adopt's public product pages, including their product launch announcement, their open-source agent stack release, and their trust and security documentation.

Execution as the Primary Problem, Not Extraction

Most ADP platforms are built extraction-first: improve the model, increase accuracy, reduce the exception rate. Adopt's architecture treats document processing as an execution problem. The question isn't only whether the extraction was correct; it's whether the downstream action was correct, and whether the system can detect when it wasn't.

Adopt's platform is built around what they call verified execution: agents validate outcomes and recover when systems change, rather than assuming a successful extraction means a successful process. This is a meaningful distinction in document-heavy workflows, where an extraction can be technically correct but still drive the wrong action because the downstream system context wasn't accounted for.

Zero-Shot API Discovery and Automated Action Generation

A persistent problem in enterprise document workflows is that the systems consuming document data change faster than the integrations connecting them. An ERP upgrade changes field names. A procurement portal changes its submission API. The ADP pipeline, built against the old API, continues sending data to the wrong endpoints until someone manually identifies the break.

Adopt addresses this with Zero-Shot API Discovery (ZAPI), which auto-generates tool cards from APIs without requiring manual schema creation or reverse engineering. According to Adopt's December 2025 open-source release, ZAPI makes APIs immediately interpretable by LLMs, removing the maintenance burden of keeping integration definitions current as underlying APIs change.
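The general idea of checking the destination's current structure before writing can be illustrated without any particular tool. The sketch below is not ZAPI and does not reflect Adopt's implementation; it assumes a hypothetical `current_fields` mapping, fetched from the destination system just before the write, and compares an outgoing payload against it.

```python
def check_payload_against_schema(payload: dict, current_fields: dict) -> list:
    """Compare an outgoing payload with the destination's current field list.

    `current_fields` maps field name -> required flag and is assumed to have
    been fetched from the destination system just before the write.
    """
    problems = [f"unknown field: {name}" for name in payload if name not in current_fields]
    problems += [f"missing required field: {name}"
                 for name, required in current_fields.items()
                 if required and name not in payload]
    return problems


# Hypothetical schema after an upgrade renamed vendor_id to supplier_id.
schema_after_upgrade = {"supplier_id": True, "invoice_no": True, "amount": True, "memo": False}
old_style_payload = {"vendor_id": "V-1001", "invoice_no": "INV-88", "amount": "150.00"}

print(check_payload_against_schema(old_style_payload, schema_after_upgrade))
# ['unknown field: vendor_id', 'missing required field: supplier_id']
```

A check like this turns a rename into an explicit, early failure instead of a write that lands in the wrong place.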

The same launch introduced an Agent Orchestrator that routes requests to the right agent, action, or tool based on user intent, and automated conversation evaluations to ensure agents behave consistently as code changes. Together, these address the feedback loop problem: the system knows whether the action succeeded, and it can evaluate whether the agent is behaving as expected over time.

Real Workflow: How an Enterprise Invoicing Team Would Use This

The setup

An accounts payable team processes 8,000 invoices per month from 400 vendors. Vendor invoice layouts differ. The ERP schema evolves. Three approvers handle non-standard invoices, each with different authority limits.

What the workflow looks like with Adopt:

- An invoice arrives via email. The agent ingests it and classifies it against the current document taxonomy, not a static template.

- ZAPI reads the current ERP API spec. No manual mapping needed. If the ERP was updated last week, the agent reads the current field structure before attempting to write.

- The agent extracts vendor name, invoice number, line items, and payment terms. It then validates the extracted values against ERP vendor master data in real time.

- If the invoice amount exceeds a configured threshold, the agent routes it to the appropriate approver based on authority limit rules, preserving full extraction context in the review interface.

- The approver sees exactly what the agent extracted, what it validated, and why the invoice was flagged. They approve, reject, or correct with full context visible.

- The approved invoice writes to the ERP. The agent verifies the write succeeded against the actual system response, not just the API return code. If the ERP rejects the entry, the agent surfaces the specific rejection reason rather than logging a generic failure.

- The interaction, including what the approver changed and why, is logged with scope and outcome. The same invoice type next month benefits from that correction.

The key difference from a standard ADP pipeline is the sixth step, the ERP write: the agent verifies the downstream action, not just the extraction. The pipeline doesn't stop at 'data was sent.' It confirms 'data was accepted and written correctly.'
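Verifying a write rather than trusting the return code can be sketched as a generic read-after-write check. This is an illustration of the pattern, not Adopt's implementation: `FakeErp` is an in-memory stand-in that deliberately returns success while truncating a field, and all method names are hypothetical.

```python
class FakeErp:
    """In-memory stand-in for an ERP client; not a real API."""

    def __init__(self):
        self._rows = {}

    def write_invoice(self, invoice: dict) -> int:
        # Simulates a system that returns success but truncates a field.
        stored = dict(invoice)
        stored["vendor_name"] = stored["vendor_name"][:10]
        self._rows[invoice["invoice_number"]] = stored
        return 200

    def read_invoice(self, invoice_number: str):
        return self._rows.get(invoice_number)


def write_and_verify(erp, invoice: dict) -> list:
    """Write, then read back and return the fields that differ from what was sent."""
    status = erp.write_invoice(invoice)
    if status != 200:
        return [f"write rejected with status {status}"]
    stored = erp.read_invoice(invoice["invoice_number"])
    if stored is None:
        return ["record not found after write"]
    return [f"{k}: sent {v!r}, stored {stored.get(k)!r}"
            for k, v in invoice.items() if stored.get(k) != v]


invoice = {"invoice_number": "INV-88",
           "vendor_name": "Acme Supplies International",
           "total": "150.00"}
print(write_and_verify(FakeErp(), invoice))
# ["vendor_name: sent 'Acme Supplies International', stored 'Acme Suppl'"]
```

The status code alone would have logged this write as a success. The read-back is what exposes the mismatch between what was sent and what the system actually holds.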

Dynamic Action Orchestration for Non-Standard Documents

Fixed pipelines fail on documents that don't match the expected structure. Adopt's Dynamic Action Orchestration composes and executes actions in real time using whatever tools are available, even when no predefined, grounded action applies directly. This means the system doesn't stall when it encounters a document variant outside its training distribution. It reasons through available capabilities and assembles the appropriate response.

For document workflows specifically, this matters most in long-running processes where mid-workflow exceptions are routine. The system can adapt to the current state of the document and the downstream system rather than requiring a predefined branch for every scenario that could arise.

Human Review as a First-Class Execution State

Adopt's Agent Builder includes built-in approval workflows with version controls and RBAC-managed publishing rights. When a document requires human intervention, the execution context is preserved across the interruption. Reviewers can see what the agent attempted, why processing paused, and what decision they're being asked to make.

When processing resumes after review, the agent carries the reviewer's decision forward as context rather than restarting from the beginning. This addresses the re-entry problem directly: the workflow knows what happened before the pause, and it doesn't repeat work that was already validated by a human.

Security, Governance, and Deployment Flexibility

Adopt holds SOC 2 Type 2 certification covering all five Trust Services Criteria (Security, Availability, Processing Integrity, Confidentiality, and Privacy), as well as ISO/IEC 27001 certification. RBAC is available at the platform level, and Adopt's support team requires explicit client approval before accessing customer data.

For regulated industries with strict data residency requirements, Adopt offers VPC deployment via Helm. All execution and sensitive data handling can stay within the customer's own infrastructure. Audit trails live inside the customer's environment rather than the vendor's, which simplifies compliance demonstrations for frameworks like GDPR, HIPAA, and SOC 2.

Every agent action is logged with scope, intent, and outcome. Compliance teams can trace a document from ingestion to final system entry without reconstructing events from disparate logs across disconnected systems.

Conclusion

Automated document processing fails in predictable ways. Confidence thresholds get set once and never revisited. Exception queues grow faster than the automation meant to shrink them. Extraction errors surface three systems downstream, in the wrong GL code or a failed payment. Long-running workflows lose state at every human touchpoint. Regulatory form changes corrupt entire quarters of data before anyone catches them.

The common thread across all of these failures is the same: document processing was scoped as an extraction project and delivered as a pipeline, when the real problem is an execution problem. Getting the text out of a document is a solved problem. Getting the right action to happen in the right downstream system, correctly, every time, including when the document is unusual and including when a human has to intervene, is not.

Fixing this requires treating the full six stages of ADP (ingestion, classification, extraction, validation, routing, and downstream action) as a single execution problem rather than a data transfer problem. It requires human review that's built into the execution model, not bolted on outside it. And it requires feedback loops that close: corrections that reach the models that caused the errors.

Adopt's execution-first architecture addresses these problems directly, through verified downstream action, zero-shot API discovery that removes schema maintenance overhead, and human-in-the-loop design that preserves context across interruptions. Whether you're evaluating ADP architecture from scratch or diagnosing why your current implementation keeps generating exceptions, those three properties are the right ones to evaluate any platform against.

Frequently Asked Questions

1. When does automated document processing require a stateful execution model rather than a stateless pipeline?

Any workflow that can pause, be corrected, or require approval before proceeding needs stateful execution. Stateless pipelines lose context at every interruption, so human corrections don't carry forward. Loan processing, contract review, insurance claims, and regulatory submissions all require it by default.

2. How should teams measure extraction quality in production rather than in test environments?

Track the rate at which extracted values are overridden by downstream system constraints, rejected by validation rules, or corrected during human review. Also monitor confidence score distributions over time. Drift in average confidence without corresponding changes in exception rates usually signals the model is becoming overconfident on out-of-distribution inputs.

3. What's the right way to structure human review so it feeds back into processing quality over time?

Reviewer corrections need to be captured as labeled data, recorded against the specific extraction output that prompted them. Without that linkage, the correction has no path back to the model. With it, you have a continuous stream of production labels that can inform retraining and threshold adjustment.

4. How do regulated industries handle document processing in environments with strict data residency requirements?

VPC deployment keeps execution and sensitive data within the customer's own infrastructure, meeting cross-border transfer restrictions and data residency requirements at the architecture level. The compliance audit trail lives in the customer's environment, which simplifies demonstrations to auditors for GDPR, HIPAA, and SOC 2 frameworks.
