Here's everything you need to learn about AI Knowledge Bases or LLM Knowledge Bases, the challenges, solutions, platforms and more.

Every agentic code editor starts from scratch. It has no memory, no context, and no understanding of your architecture.

Karpathy built LLM wikis. Graphify created context graphs. Entire.io saves session transcripts. Developers draw architectural diagrams and give them to agents before each task.

All of these tools aim to solve the same problem - Agents need knowledge that lasts.

But none of them have fully solved it.

This is the main reason why code reviews have turned into design reviews. Even though developers move quickly with tools like Cursor and Claude Code, PR requests are rejected. This is not because the code is broken, but because it doesn’t fit your system. The cycle is reject, rewrite, reject, rewrite. It’s an iteration without alignment.

The agent didn’t break your architecture on purpose. It simply never knew your architecture was there.

This is the knowledge crisis in agentic development. Agents write code faster than humans can review it, but every agent often starts from zero.

3 Major Challenges Due to Missing Context

Every coding session is a blank slate.

Your agent reads the files. Figures out the context. Writes code. Session ends.

Next time? It reads everything again.

1. Token Costs Spiral Out of Control

If your repo has 200 files, the agent re-reads all 200 for every query. With Claude Sonnet 4.6 costing $3 per million input tokens and each developer making 5 queries a day, that adds up to 8.25 million tokens per developer per month.

With 40 developers, you’re spending $990 a month just to load context. The agent needed only three files, but read all 200.

Increasing the context window made things worse. Larger windows mean agents load even more irrelevant content. Response times doubled from 2 to 4 seconds.

Caching in Cursor and Claude helps, but only for a while. If there’s no activity for five minutes, the cache expires. When developers take a break, the cache is gone, so the next query reloads everything.

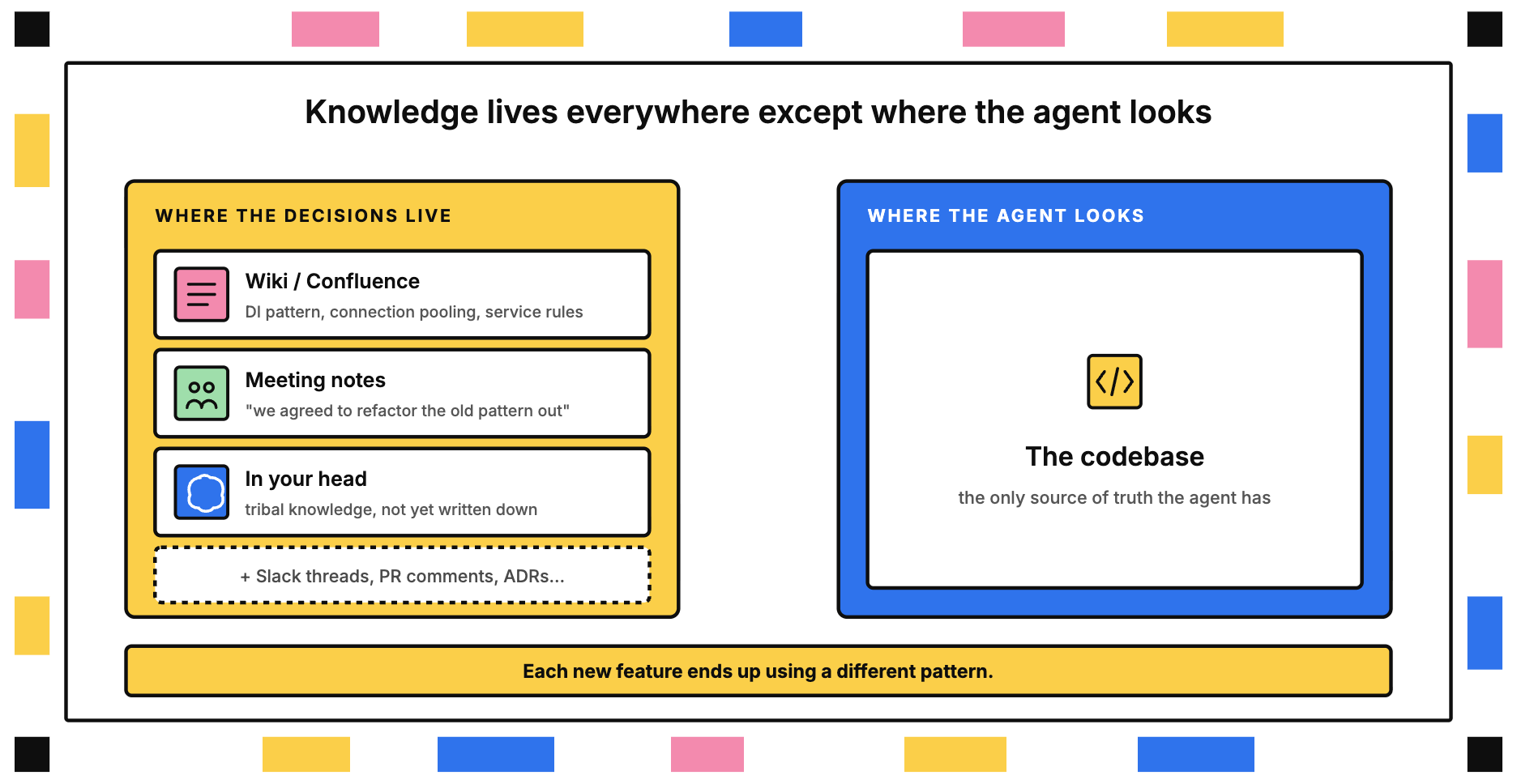

2. Architectural Decisions Evaporate

You chose a pattern for creating services, used dependency injection for testing, and set up connection pooling for database calls.

You documented these decisions, added them to your wiki, and shared them with the team.

But the challenge is that the agent doesn’t check your wiki. It only looks at the codebase, which still has examples of all three patterns because you refactored over time. The agent just picks the most common pattern, not realizing some of it is legacy code you’re trying to replace.

Your architecture lives in meeting notes, in your head, and in the wiki.

But it’s missing from the one place the agent checks - the code itself.

Each new feature ends up using a different pattern.

3. Code Reviews Become Design Reviews

Before agentic code editors, code reviews took about 15 minutes. You’d check the logic, tests, and style.

Now, reviews take 45 minutes. You have to check architecture, patterns, and standards, plus go through two or three rounds of revisions.

The review queue keeps growing, developers are left waiting, and your team’s velocity drops.

You’re left with two choices - approve PRs without a deep review and add architectural debt, or enforce standards and slow everything down.

Neither option is a win.

All three of these struggles share the same root cause - agents work without any architectural memory. They see the code, but not the reasoning behind it.

The bottleneck has shifted. Writing code isn’t the problem anymore—now, the real challenge is preserving architectural knowledge.

Why Traditional Solutions Break Down

While there are many ways to solve the above problem, they have some of the other limitations -

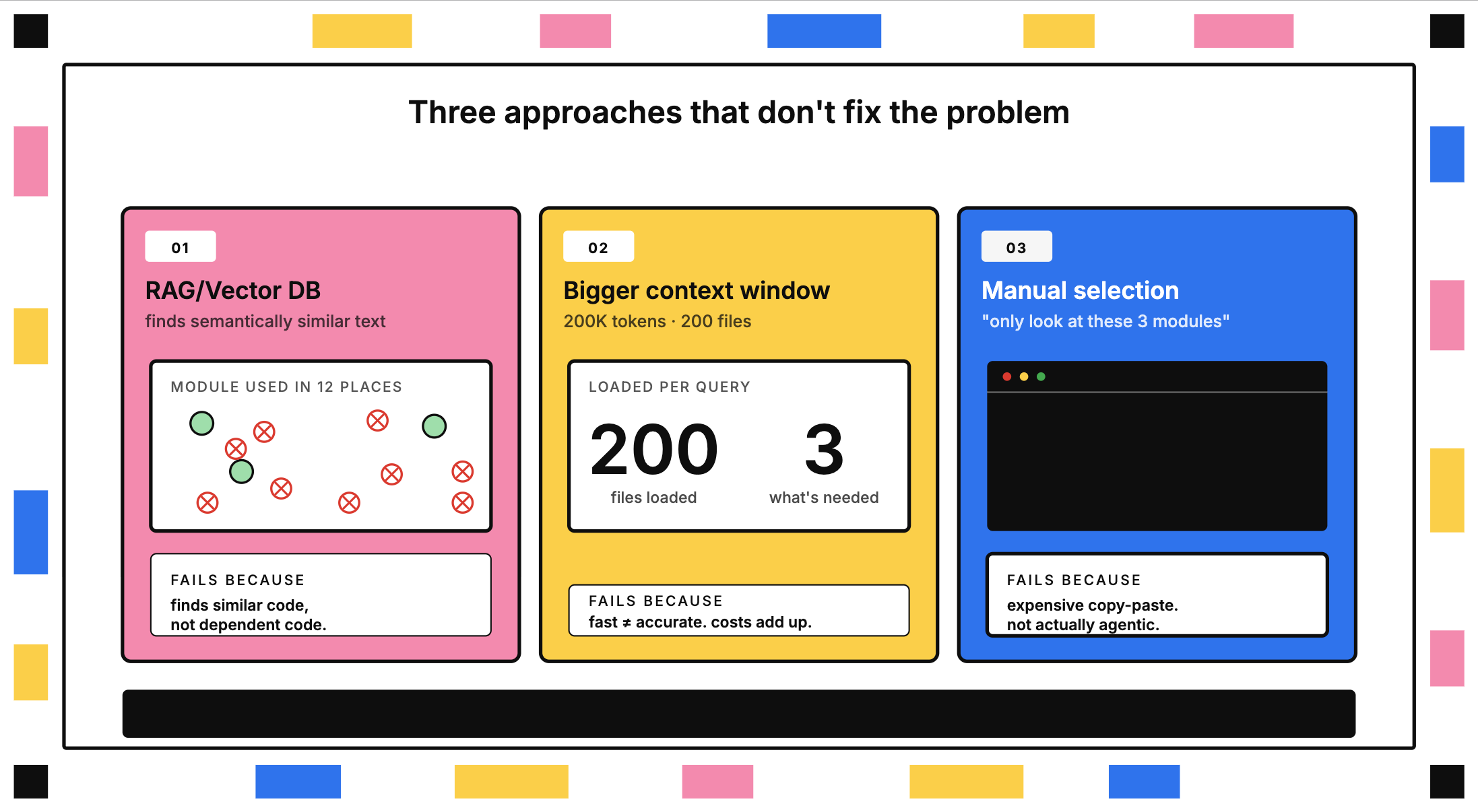

#1. RAG is not effective for working with codebases

Vector databases were designed for documents where finding semantically similar content is important. For example, matching similar text works well for support tickets.

However, this approach does not work for code dependencies. If you update a module that is used in twelve different places, a vector search will not find all twelve instances. It will only identify code that is semantically similar, not code that is structurally dependent.

Vector databases are ineffective when the required context spans multiple files, especially for complex features where changes in one module require updates across many places.

#2. Increasing the context window does not fix this problem

Claude Sonnet 4.6 can handle up to 200,000 tokens, which means you can now include 200 files instead of just 50.

However, you end up loading 200 files just to answer a question about three of them. This also increases latency, taking three to four seconds per query to process a million tokens. Fast results without accuracy can become costly and unhelpful.

#3. Manually selecting context goes against the intended purpose

Some developers choose which files the agent should read by hand, telling it to "only look at these three modules."

This approach is not true agentic development. It is more like an expensive form of copy-pasting. Instead of moving quickly, you now have to filter the context before every task.

What Technical Leaders Are Building for AI Knowledge Bases

Many leaders have already realized agentic code editors won't fix themselves. So they're building knowledge layers on top. There are five patterns emerging:

1. LLM Wiki by Karpathy

Instead of scattering knowledge across Notion, Google Docs, browser bookmarks, and sticky notes, you keep everything as structured markdown files. That’s the idea behind LLM Wiki.

The agent uses a curated knowledge base instead of raw files. The wiki becomes a lasting, growing resource. Each time you add a document, the LLM does more than just index it. It reads the content, pulls out key information, updates pages, revises summaries, flags contradictions, and improves cross-links.

Where it shines: Research-heavy projects. Teams are synthesizing information across papers, documentation, and meeting notes. Startups documenting tribal knowledge before it's lost.

What it doesn't solve: It doesn’t solve -

- Real-time agent coordination.

- Cross-file code dependencies.

- Structural relationships.

2. Graphify (link)

Rather than having to constantly rebuild your graph, you can simply use the SHA256 cache to only regenerate what has changed in your last session. Your queries will now use a compact representation of the structure instead of reading from the uncompiled source.

Not a wiki. A graph. Nodes are entities (classes, functions, concepts). Edges are relationships (calls, imports, dependencies).

Graphify runs in two passes, and this is where things get interesting. First, it extracts structure from code using AST parsing. This is deterministic and doesn't involve any AI. Then, AI agents process unstructured data such as documents, papers, and images to extract concepts and relationships.

Token reduction: The claim is 71.5× fewer tokens per query.

Where it shines: Multi-language codebases with heavy documentation. Repos where understanding "what connects to what" is harder than understanding individual files.

What it doesn't solve: It doesn’t solve for decision traceability.

3. Entire.io

Entire.io allows humans and agents to query not just the code but also the reasoning behind it.

Every Cursor or Claude Code session generates a transcript: prompts, reasoning, decisions. That disappears when the session ends.

Entire.io's checkpoints tool automatically captures AI agent context—reasoning, prompts, and decisions—on every Git commit.

While traditional Git tells you what changed, the reasoning behind those changes often evaporates once an AI's context window closes.

Where it shines: For teams running parallel agent sessions. Enterprises need audit trails for AI-generated code.

What it doesn't solve: It doesn’t address proactive architectural enforcement or the prevention of bad decisions before they ship.

4. Architectural Diagram & PR-Level Design Enforcement

- Architectural Diagrams as Agent Instructions

Manual but effective. Senior engineers write explicit architectural documents and add instructions like :

- "Utils live here. APIs live here. Interfaces live here."

- "Do not violate this pattern. Do not violate this pattern."

- Include examples from past implementations.

The agent gets this document in every task. It's pre-training for your specific codebase.

Where it shines: For teams with strong architectural opinions and codebases where consistency matters more than speed.

What it doesn't solve: It doesn’t keep diagrams in sync with code. Human effort scales linearly with codebase growth.

- PR-Level Design Enforcement

Don't accept every PR. If it violates architectural patterns, reject it. Ask for another iteration.

Coding isn't the bottleneck anymore. Alignment is. Let agents iterate fast. But teams need to enforce boundaries.

Where it shines: Teams with dedicated architects. Projects where tech debt costs more than iteration time.

What it doesn't solve: Burnout from constant PR rejection. Doesn't scale past 5-10 developers.

The Unifying Insight: All Five Solve the Same Problem

Agents are stateless. Your architecture isn't.

The abstract issue here is that these agentic code editors are really good at writing code. But they are terrible at making a judgment on what code to write X.

The logical extreme: Millions of dollars. Thousands of developer hours. The product fails in the market. Why? Because somewhere, someone made the wrong architectural decision—and the agents kept building on top of it.

These tools close the translation gap between:

- What your company needs

- What your agents are building

What's Still Missing

Each developer has their own agent code editor. But there's no shared session where architects and engineers align on an MD file, watch an LLM convert it into a slide deck or codebase, and give feedback in real time.

There are also no group-level collaborative alignment tools. We expect OpenAI, Anthropic, and Google to move soon. The pressure is on.

Final Thoughts

Agentic code editors made coding fast. The new bottleneck is knowledge preservation.

If your code reviews are design reviews, if your token bills are $500K/month, if your agents keep recreating modules - you don't need faster code generation.

You need architectural memory. You need a knowledge base that compounds. You need agents that understand not just what your code does, but why it exists.

The era of stateless agents is ending. The era of knowledge-driven agentic development is here.

Browse Similar Articles

Accelerate Your Agent Roadmap

Adopt gives you the complete infrastructure layer to build, test, deploy and monitor your app’s agents — all in one platform.

.svg)

.svg)